Windows Setup — Getting Started with WSL2

The Voyager SDK is built for Linux. On Windows, you run it inside Windows Subsystem for Linux (WSL2) — a real Linux environment that runs alongside Windows.

| Feature | Linux | Windows (WSL2) | Windows (Native) |
|---|---|---|---|
| Full SDK development | Yes | Yes | No |
| Network deployment | Yes | Yes | No |
| Inference (AxRuntime) | Yes | Yes | Yes |

For the full SDK experience including model deployment and GStreamer pipelines, use WSL2. Native Windows supports inference only via the AxRuntime API.

WSL2 setup path

Step 1: Install WSL2

Open PowerShell as Administrator and run:

wsl --install -d Ubuntu-22.04

Restart your computer when prompted. After the restart, Ubuntu opens and asks you to create a Linux username and password.

If WSL is already installed, check which WSL version your distribution uses by running wsl --list --verbose. The VERSION column must show 2, not 1. To convert the distribution, run wsl --set-version Ubuntu-22.04 2.
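If you set up several machines, the version check can be scripted. The sketch below is an illustration, not an Axelera tool: it assumes the default column layout of wsl --list --verbose (NAME, STATE, VERSION, with * marking the default distribution) and that wsl.exe writes UTF-16LE text when its output is piped, which is its usual behaviour. Both function names are hypothetical.

```python
import subprocess


def parse_wsl_list(output: str) -> dict:
    """Map each distribution name to its WSL version (1 or 2)."""
    versions = {}
    rows = [line for line in output.splitlines() if line.strip()]
    for row in rows[1:]:  # skip the NAME / STATE / VERSION header row
        parts = row.replace("*", " ").split()  # "*" marks the default distro
        if len(parts) >= 3 and parts[-1].isdigit():
            versions[parts[0]] = int(parts[-1])
    return versions


def run_wsl_list() -> str:
    """Run on Windows; wsl.exe usually emits UTF-16LE when piped."""
    raw = subprocess.run(
        ["wsl", "--list", "--verbose"], capture_output=True, check=True
    ).stdout
    return raw.decode("utf-16-le", errors="ignore")
```

Any distribution that parse_wsl_list(run_wsl_list()) maps to 1 still needs the wsl --set-version conversion.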

Step 2: Install the Windows driver

The Axelera Windows driver is not yet Microsoft-certified (certification is in progress). Until then, it requires testsigning mode to install.

2a. Enable testsigning

Open a Command Prompt as Administrator and run:

bcdedit /set testsigning on

If your system uses BitLocker drive encryption, suspend BitLocker before running the bcdedit command, and again before every future bcdedit change. The command modifies the boot configuration, which triggers BitLocker recovery on the next restart if encryption is active.

How to suspend BitLocker for one reboot:

- Open the Windows search bar, type "BitLocker", and select Manage BitLocker

- If BitLocker is active, click Suspend protection and confirm

- BitLocker resumes automatically after the next reboot, so suspend it again before any future bcdedit changes

If you skip this and the system enters BitLocker recovery, you will need your BitLocker recovery key to regain access.

Restart your PC. The desktop will show "Test Mode" in the lower-right corner — this confirms testsigning is active.

2b. Download the drivers

Download the driver archives from the Axelera software portal:

- Metis driver (required for all hardware): MetisDriver-1.3.4.zip

- Switchtec PCIe switch driver (required only for the Metis PCIe 4-AIPU card): switchtec-kmdf-0.7_2019.zip

Extract each archive locally. Each extracted folder contains three files: .cat, .inf, and .sys.
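A quick check that each extracted folder really holds the three driver files can save a failed Add Drivers attempt later. This is a minimal sketch; the function name is hypothetical, and the folder you pass it (for example a hypothetical C:\Drivers\MetisDriver-1.3.4) is wherever you extracted the archive.

```python
from pathlib import Path

# Per the download step, every extracted driver folder holds these three files.
REQUIRED_SUFFIXES = {".cat", ".inf", ".sys"}


def missing_driver_files(folder: Path) -> set:
    """Return the required file extensions that are absent from `folder`."""
    present = {p.suffix.lower() for p in folder.iterdir() if p.is_file()}
    return REQUIRED_SUFFIXES - present
```

An empty set means the folder is ready to be selected in Device Manager.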

2c. Remove any previous driver version

If this is your first time installing the driver, skip this step — go straight to 2d.

If you already have an older Metis driver installed, remove it completely before installing the new one. Windows can keep multiple driver versions for the same device, so a leftover older driver may still be used.

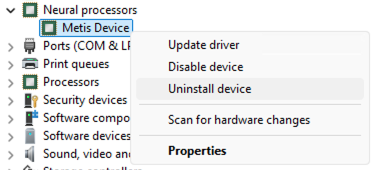

In Device Manager, locate the Metis device under Neural Processors, right-click and select Uninstall device:

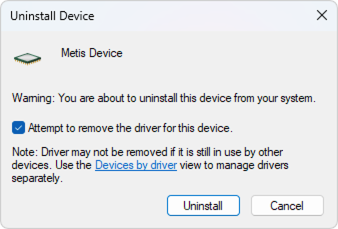

In the confirmation dialog, check "Attempt to remove the driver for this device", then click Uninstall:

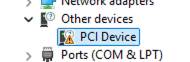

Verify that the device now appears under Other devices as a generic PCI Device. This confirms the driver is fully removed.

2d. Install via Device Manager

Open Device Manager (right-click the Windows Start button → Device Manager).

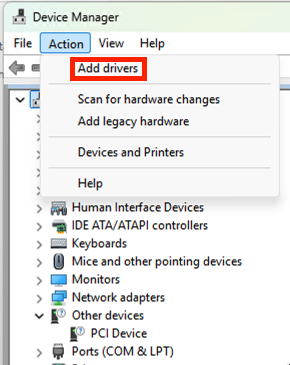

From the menu, open Action → Add Drivers:

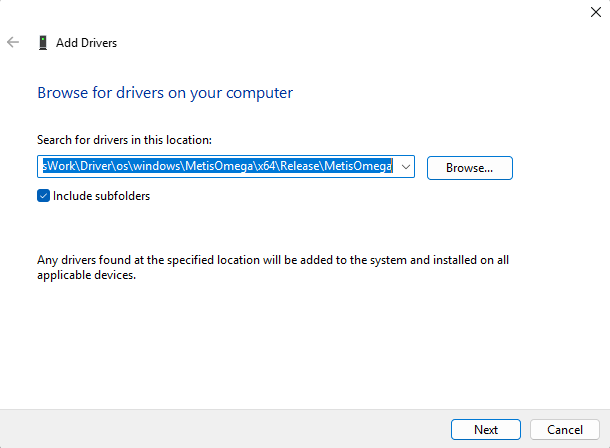

Set the path to the folder where you extracted the Metis driver files:

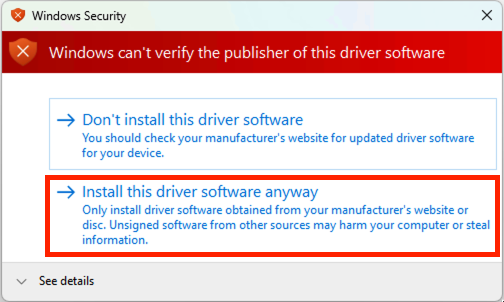

Select Install the driver anyway when prompted:

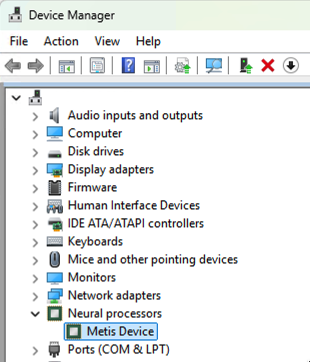

Confirm that the Metis device now appears under Neural Processors:

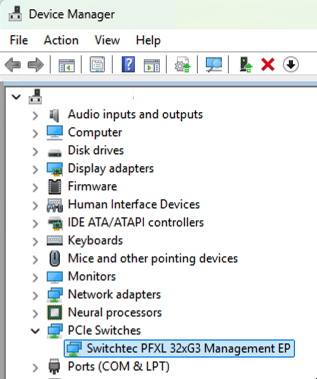

If you have a Metis PCIe 4-AIPU card, repeat the same steps for the Switchtec driver, this time pointing Add Drivers at the folder where you extracted switchtec-kmdf-0.7_2019.zip.

Step 3: Enter WSL2 and follow the Linux guide

Open a regular Command Prompt (not PowerShell, not Administrator) and enter WSL:

wsl

You're now in Linux. From here, follow the standard setup:

- Hardware Install — verify your Metis device is detected

- SDK Install — clone, install, and activate

- Verify Setup — confirm everything works

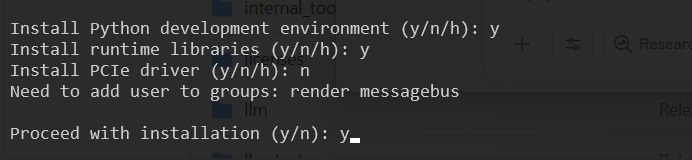

When running install.sh inside WSL, do not select the driver installation option — the driver is installed on the Windows side (Step 2 above), not inside WSL.

Step 4: Where to clone the SDK

Clone the SDK in a Windows-accessible folder so both Windows and WSL can reach it:

# Inside WSL: /mnt/c/ maps to C:\ on Windows

mkdir -p /mnt/c/Axelera

cd /mnt/c/Axelera

git clone https://github.com/axelera-ai-hub/voyager-sdk.git

cd voyager-sdk

This means:

- From WSL: /mnt/c/Axelera/voyager-sdk

- From Windows: C:\Axelera\voyager-sdk
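The mapping between the two paths is mechanical: the drive letter becomes a lowercase directory under /mnt. Inside WSL the built-in wslpath utility performs this conversion (wslpath -w for a Windows-style result, wslpath -u for a WSL-style one). The Python sketch below only illustrates the rule; it assumes the default /mnt automount root, and the function names are hypothetical.

```python
from pathlib import PurePosixPath, PureWindowsPath


def windows_to_wsl(path: str) -> str:
    r"""C:\Axelera\voyager-sdk -> /mnt/c/Axelera/voyager-sdk"""
    p = PureWindowsPath(path)
    drive = p.drive.rstrip(":").lower()
    if len(drive) != 1:  # rejects UNC shares and paths without a drive letter
        raise ValueError(f"expected a drive-letter path, got {path!r}")
    return str(PurePosixPath("/mnt", drive, *p.parts[1:]))


def wsl_to_windows(path: str) -> str:
    r"""/mnt/c/Axelera/voyager-sdk -> C:\Axelera\voyager-sdk"""
    parts = PurePosixPath(path).parts  # e.g. ('/', 'mnt', 'c', 'Axelera', ...)
    if len(parts) < 3 or parts[:2] != ("/", "mnt") or len(parts[2]) != 1:
        raise ValueError(f"not under /mnt/<drive>: {path!r}")
    return str(PureWindowsPath(parts[2].upper() + ":\\", *parts[3:]))
```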

Native Windows inference

If you need to run inference natively on Windows without WSL, follow these steps. All commands below run in Windows — not in WSL.

Native Windows inference uses the AxRuntime API only. For model deployment, GStreamer pipelines, and all SDK tooling, use WSL2.

Step 1: Install Python

-

Download Python for Windows — use the official website, not the Microsoft Store.

-

During installation:

- Enable "Add Python to PATH"

- Select "Disable path length limit" when prompted

-

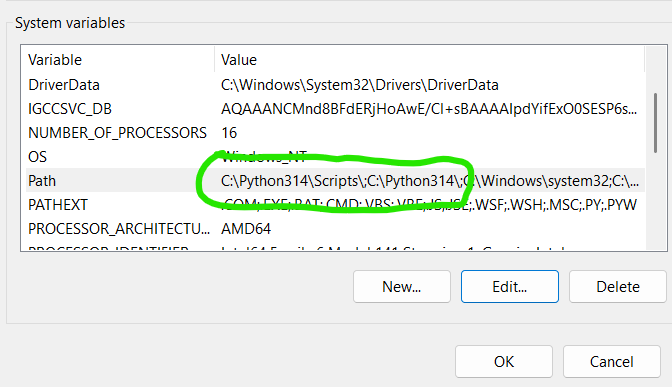

Add the Python installation path and the

Python\Scriptspath to the Windows Path environment variable:

-

Create a virtual environment:

python -m venv venv-win
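The PATH requirement and the "not the Microsoft Store" advice can both be checked from Python. This is a hedged sketch: missing_path_entries and looks_like_store_python are hypothetical helpers, and the Store check is only a heuristic based on Store builds running from a WindowsApps folder.

```python
import ntpath


def _norm(entry: str) -> str:
    # Windows paths are case-insensitive; trailing slashes are ignored too.
    return ntpath.normcase(entry.strip().rstrip("\\/"))


def missing_path_entries(path_env: str, python_dir: str) -> list:
    """Return python_dir and/or python_dir\\Scripts if PATH lacks them."""
    present = {_norm(e) for e in path_env.split(";") if e.strip()}
    wanted = [python_dir, ntpath.join(python_dir, "Scripts")]
    return [w for w in wanted if _norm(w) not in present]


def looks_like_store_python(executable: str) -> bool:
    # Heuristic: Microsoft Store builds run from a ...\WindowsApps\... folder.
    return "windowsapps" in executable.lower()
```

Run it with the base interpreter, passing os.environ["PATH"] and sys.base_prefix to missing_path_entries and sys.executable to looks_like_store_python; an empty list and False mean this step is done.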

Step 2: Download Windows packages

Open a regular Command Prompt (not PowerShell, not Administrator) and navigate to your SDK directory:

cd C:\Axelera\voyager-sdk

Create a fresh download directory (the first command deletes any previous windows-packages folder) and download all packages:

rmdir /s /q windows-packages 2>nul

mkdir windows-packages

cd windows-packages

curl -L -O https://software.axelera.ai/artifactory/axelera-win/packages/1.6.x/axelera-win-device-installer.exe

curl -L -O https://software.axelera.ai/artifactory/axelera-win/packages/1.6.x/axelera-win-runtime-installer.exe

curl -L -O https://software.axelera.ai/artifactory/axelera-win/packages/1.6.x/axelera-win-syslibs-installer.exe

curl -L -O https://software.axelera.ai/artifactory/axelera-win/packages/1.6.x/axelera-win-toolchain-deps-installer.exe

curl -L -O https://software.axelera.ai/artifactory/axelera-win/packages/1.6.x/axelera-win-services-installer.exe

curl -L -O https://software.axelera.ai/artifactory/axelera-runtime-pypi/axelera-runtime/axelera_runtime-1.6.0-py3-none-any.whl

curl -L -O https://software.axelera.ai/artifactory/axelera-runtime-pypi/axelera-types/axelera_types-1.6.0-py3-none-any.whl

curl -L -O https://software.axelera.ai/artifactory/axelera-runtime-pypi/axelera-llm/axelera_llm-1.6.0-py3-none-any.whl

cd ..
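If you prefer a script to the curl commands, the same eight files can be fetched with Python's standard library. The URLs are copied from the commands above; the 1.6.x and 1.6.0 version strings are the ones this guide targets and would need updating for other releases. package_urls and download_all are hypothetical helper names.

```python
import urllib.request
from pathlib import Path

# Copied from the curl commands; change the versions for other releases.
BASE = "https://software.axelera.ai/artifactory"
EXE_VERSION = "1.6.x"
WHEEL_VERSION = "1.6.0"
INSTALLERS = ["device", "runtime", "syslibs", "toolchain-deps", "services"]
# PyPI repository folder -> wheel filename stem
WHEELS = {
    "axelera-runtime": "axelera_runtime",
    "axelera-types": "axelera_types",
    "axelera-llm": "axelera_llm",
}


def package_urls() -> list:
    urls = [
        f"{BASE}/axelera-win/packages/{EXE_VERSION}/axelera-win-{name}-installer.exe"
        for name in INSTALLERS
    ]
    urls += [
        f"{BASE}/axelera-runtime-pypi/{repo}/{stem}-{WHEEL_VERSION}-py3-none-any.whl"
        for repo, stem in WHEELS.items()
    ]
    return urls


def download_all(dest: Path) -> None:
    dest.mkdir(parents=True, exist_ok=True)
    for url in package_urls():
        target = dest / url.rsplit("/", 1)[-1]
        # urllib follows HTTP redirects by default, like curl -L.
        urllib.request.urlretrieve(url, target)
```

Calling download_all(Path("windows-packages")) from the SDK directory places the files where Steps 3 and 4 expect them.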

Step 3: Install the executable packages

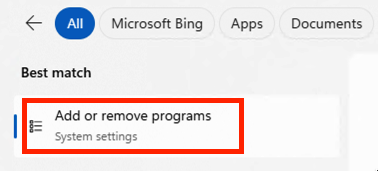

If any previous Axelera packages are installed, uninstall them via Apps & Features before proceeding.

Using Windows Explorer, navigate to C:\Axelera\voyager-sdk\windows-packages and run each .exe installer in sequence.

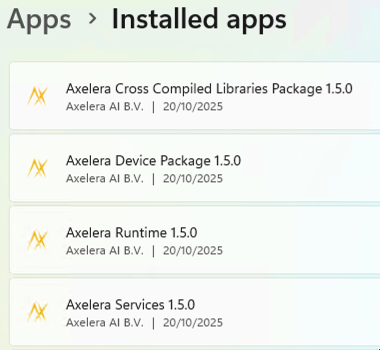

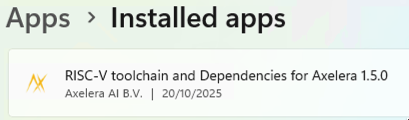

After installation, open Settings → Apps & features (or Add or remove programs) and confirm that all five packages are listed: Axelera Cross Compiled Libraries Package, Axelera Device Package, Axelera Runtime, Axelera Services, and RISC-V Toolchain and Dependencies for Axelera. Exact version numbers will vary.
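The same check can be made from Python by reading the registry Uninstall keys that back the installed-programs list. This sketch assumes the display names are the five listed above, possibly followed by a version suffix (hence the prefix match). installed_display_names works only on Windows because it uses the standard winreg module; both helper names are hypothetical.

```python
REQUIRED = [
    "Axelera Cross Compiled Libraries Package",
    "Axelera Device Package",
    "Axelera Runtime",
    "Axelera Services",
    "RISC-V Toolchain and Dependencies for Axelera",
]
UNINSTALL = r"SOFTWARE\Microsoft\Windows\CurrentVersion\Uninstall"


def missing_packages(installed: list) -> list:
    # Prefix match, since a display name may carry a version suffix.
    names = [n.strip().lower() for n in installed]
    return [r for r in REQUIRED if not any(n.startswith(r.lower()) for n in names)]


def installed_display_names() -> list:
    """Collect DisplayName values from the Uninstall keys (Windows only)."""
    import winreg  # standard library, but only available on Windows

    roots = [
        (winreg.HKEY_LOCAL_MACHINE, UNINSTALL),
        (winreg.HKEY_LOCAL_MACHINE, UNINSTALL.replace("SOFTWARE", r"SOFTWARE\WOW6432Node")),
        (winreg.HKEY_CURRENT_USER, UNINSTALL),
    ]
    names = []
    for hive, path in roots:
        try:
            key = winreg.OpenKey(hive, path)
        except OSError:
            continue  # key absent on this system
        with key:
            for i in range(winreg.QueryInfoKey(key)[0]):  # number of subkeys
                try:
                    with winreg.OpenKey(key, winreg.EnumKey(key, i)) as sub:
                        names.append(winreg.QueryValueEx(sub, "DisplayName")[0])
                except OSError:
                    continue  # entries without a DisplayName are skipped
    return names
```

An empty result from missing_packages(installed_display_names()) matches the five-package check above.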

After all installers complete, close every Command Prompt and PowerShell window and open a new Command Prompt before proceeding.

Step 4: Install Python packages

Activate the virtual environment and install the wheels:

venv-win\Scripts\activate.bat

pip install windows-packages\axelera_runtime-1.6.0-py3-none-any.whl

pip install windows-packages\axelera_types-1.6.0-py3-none-any.whl

pip install windows-packages\axelera_llm-1.6.0-py3-none-any.whl

Remember to activate the virtual environment each time you open a new Command Prompt. From your SDK directory:

venv-win\Scripts\activate.bat

You'll know it's active when (venv-win) appears at the start of your prompt.
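A short script can confirm both that the venv is active and that the three wheels are installed. It relies only on the standard library (sys.prefix versus sys.base_prefix, and importlib.metadata). The distribution names come from the wheel filenames in Step 2; the helper names are hypothetical.

```python
import sys
from importlib import metadata
from typing import Dict, Optional

# Distribution names taken from the wheel filenames in Step 2.
WHEELS = ["axelera_runtime", "axelera_types", "axelera_llm"]


def in_virtualenv() -> bool:
    # In a venv, sys.prefix is the venv folder; base_prefix is the base install.
    return sys.prefix != sys.base_prefix


def wheel_versions() -> Dict[str, Optional[str]]:
    """Installed version of each wheel, or None if it is missing."""
    versions: Dict[str, Optional[str]] = {}
    for name in WHEELS:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions
```

Inside (venv-win), in_virtualenv() should return True and wheel_versions() should report a version for each wheel.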

Step 5: Run examples

First, obtain a deployed model. If you completed the WSL2 setup and deployed a model there, it is accessible from Windows at C:\Axelera\voyager-sdk\build\. If not, download a pre-built model:

axdownloadmodel resnet50-imagenet-onnx

The model downloads to C:\Axelera\voyager-sdk\build\.

Run inference using the axrunmodel utility (measures performance):

axrunmodel C:\Axelera\voyager-sdk\build\resnet50-imagenet-onnx\resnet50-imagenet-onnx\1\model.json

This runs the model multiple times and prints runtime statistics.
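The model.json path in this example follows a regular pattern, so it can be built from the model name. This is a sketch assuming the `build\<model>\<model>\1\model.json` layout seen above also holds for other models (not confirmed here), that the SDK lives in C:\Axelera\voyager-sdk, and that axrunmodel is on PATH in the activated venv. model_json and run_axrunmodel are hypothetical.

```python
import subprocess
from pathlib import PureWindowsPath

# Assumption: the SDK was cloned to C:\Axelera\voyager-sdk (WSL2 Step 4).
SDK_ROOT = PureWindowsPath(r"C:\Axelera\voyager-sdk")


def model_json(model: str) -> PureWindowsPath:
    # Layout inferred from the resnet50 example: build\<model>\<model>\1\model.json
    return SDK_ROOT / "build" / model / model / "1" / "model.json"


def run_axrunmodel(model: str) -> int:
    """Run the axrunmodel CLI on a downloaded model and return its exit code."""
    return subprocess.run(["axrunmodel", str(model_json(model))]).returncode
```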

Run inference using the Python API:

Download an image to C:\Axelera\voyager-sdk\examples\axruntime\images, then:

cd examples\axruntime

python -m axruntime_example -v --aipu-cores 1 --labels imagenet-labels.txt ..\..\build\resnet50-imagenet-onnx\resnet50-imagenet-onnx\1\model.json images
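When scripting this step, building an argument list instead of a shell string avoids quoting problems with Windows paths. The flags below are copied verbatim from the command above; example_argv, has_images and run_example are hypothetical helpers, and the set of image extensions is an assumption about what the example accepts.

```python
import subprocess
import sys
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}  # assumed accepted formats


def example_argv(model_json: str, images_dir: str, cores: int = 1) -> list:
    # Same flags as the documented command line.
    return [sys.executable, "-m", "axruntime_example", "-v",
            "--aipu-cores", str(cores), "--labels", "imagenet-labels.txt",
            model_json, images_dir]


def has_images(images_dir: Path) -> bool:
    return images_dir.is_dir() and any(
        p.suffix.lower() in IMAGE_SUFFIXES for p in images_dir.iterdir())


def run_example(model_json: str, images_dir: str) -> None:
    if not has_images(Path(images_dir)):
        raise FileNotFoundError(f"no images found in {images_dir}")
    subprocess.run(example_argv(model_json, images_dir), check=True)
```

Using sys.executable runs the example with whichever interpreter launched the script, which is the venv-win one once the environment is activated.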

Step 6: Run LLM models

A set of precompiled LLM models can run natively on Windows. To see available models:

axllm --help-network

Run a model in single-prompt mode:

axllm llama-3-2-1b-1024-4core-static --prompt "Give me a joke"

Run interactively in chat mode:

axllm llama-3-2-1b-1024-4core-static

For all options, see the LLM Inference Guide.
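To call axllm from another program, pass the prompt as its own argument so no shell quoting is involved. The sketch uses only the two modes shown above (with and without --prompt). The helper names are hypothetical, and because this guide does not describe axllm's output format, ask simply returns the raw text.

```python
import subprocess
from typing import Optional


def axllm_argv(model: str, prompt: Optional[str] = None) -> list:
    # With --prompt: single-prompt mode; without it: interactive chat mode.
    argv = ["axllm", model]
    if prompt is not None:
        argv += ["--prompt", prompt]
    return argv


def ask(model: str, prompt: str) -> str:
    """Run one prompt and return whatever axllm writes to stdout."""
    result = subprocess.run(
        axllm_argv(model, prompt), capture_output=True, text=True, check=True
    )
    return result.stdout
```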

Using PowerShell

After completing installation, you can use PowerShell instead of Command Prompt. The only difference is how you activate the virtual environment:

.\venv-win\Scripts\Activate.ps1

Once activated, all the same commands work as in Command Prompt.

If you get an error activating the environment in PowerShell, your execution policy may be blocking unsigned scripts. Check your current policy:

Get-ExecutionPolicy

If needed, set a more permissive policy (run PowerShell as Administrator):

Set-ExecutionPolicy RemoteSigned

Try activating the environment again after changing the policy.
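The policy names map onto a simple rule for a script created on the local machine, such as venv-win\Scripts\Activate.ps1. This sketch follows Microsoft's documented execution-policy behaviour, conservatively treats AllSigned as blocking, and uses hypothetical helper names.

```python
import subprocess

# Effective policies that let a script created on this machine run without a
# signature (see Microsoft's about_Execution_Policies documentation).
ALLOWS_LOCAL_SCRIPTS = {"remotesigned", "unrestricted", "bypass"}


def can_run_activate(policy: str) -> bool:
    """True if Activate.ps1 should load under this effective policy.

    Restricted blocks every script. AllSigned requires a trusted signature
    and is treated as blocking here.
    """
    return policy.strip().lower() in ALLOWS_LOCAL_SCRIPTS


def current_policy() -> str:
    """Ask PowerShell for the effective policy (Windows only)."""
    return subprocess.run(
        ["powershell", "-NoProfile", "-Command", "Get-ExecutionPolicy"],
        capture_output=True, text=True, check=True,
    ).stdout
```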

Note: use Command Prompt (not PowerShell) for the installation steps — particularly Step 3 (package installation). PowerShell is fine for running examples and day-to-day use after setup is complete.

Troubleshooting

| Symptom | Fix |
|---|---|
| wsl --install fails | Enable "Virtual Machine Platform" in Windows Features |
| WSL shows version 1 | Run wsl --set-version Ubuntu-22.04 2 |
| No Axelera device found in WSL | Install the Windows driver (Step 2), then restart WSL: run wsl --shutdown and reopen it |
| BitLocker recovery screen after running bcdedit | Enter your BitLocker recovery key to boot; before any future bcdedit change, suspend BitLocker first (see Step 2a) |
| "Test Mode" not showing after bcdedit | Confirm the command ran in an Administrator prompt, then restart again |
| PowerShell can't activate venv | Run Set-ExecutionPolicy RemoteSigned as Administrator, then retry |
| Installer fails silently | Uninstall all previous Axelera packages first via Apps & Features |

See also

- SDK Install — the main installation guide (run inside WSL2)

- Verify Setup — confirm hardware and SDK are working

- LLM Inference Guide — full LLM options including Windows

- Glossary: AIPU — the AI Processing Unit on Metis hardware